How Ascension Health Is Personalizing Healthcare

Rajan Mohan blends brand, digital and AI to simplify patient journeys and personalize care at Ascension Health, one of the nation’s largest faith-based health systems.

Stop fumbling for cables in the dark. These WIRED-tested stands and pads will take the hassle out of refueling your phone, wireless earbuds, and watch.

Jonathan Stempel / Reuters: A federal judge issues a preliminary injunction blocking Virginia from enforcing a new law restricting children's social media use, on First Amendment grounds. A federal judge on Friday blocked Virginia from enforcing a new law that aimed to protect children from being addicted to social media …

Q Hayashida’s blend of fantasy, sci‑fi, and dark humor deserves far more recognition.

For the past year, the enterprise AI community has been locked in a debate about how much freedom to give AI agents. Too little, and you get expensive workflow automation that barely justifies the "agent" label. Too much, and you get the kind of data-wiping disasters that plagued early adopters of tools like OpenClaw.
This week, Google Labs released an update to Opal, its no-code visual agent builder, that quietly lands on an answer. It carries lessons that every IT leader planning an agent strategy should study carefully.
The update introduces what Google calls an "agent step", which transforms Opal's previously static, drag-and-drop workflows into dynamic, interactive experiences. Builders no longer have to specify which model or tool to call and in what order. They can now define a goal and let the agent determine the best path to reach it: selecting tools, triggering models like Gemini 3 Flash or Veo for video generation, and even initiating conversations with users when it needs more information.
It sounds like a modest product update. It is not. What Google has shipped is a working reference architecture for the three capabilities that will define enterprise agents in 2026: adaptive routing, persistent memory, and human-in-the-loop orchestration. All three are made possible by the rapidly improving reasoning abilities of frontier models like the Gemini 3 series.
The 'off the rails' inflection point: Why better models change everything about agent design
To understand why the Opal update matters, you need to understand a shift that has been building across the agent ecosystem for months. The first wave of enterprise agent frameworks, such as the early versions of CrewAI and the initial releases of LangGraph, was defined by a tension between autonomy and control. Early models simply were not reliable enough to be trusted with open-ended decision-making.
The result was what practitioners began calling "agents on rails": tightly constrained workflows where every decision point, every tool call, and every branching path had to be pre-defined by a human developer. This approach worked, but it was limited. Building an agent on rails meant anticipating every possible state the system might encounter, a combinatorial nightmare for anything beyond simple, linear tasks. Worse, it meant that agents could not adapt to novel situations, which is the very capability that makes agentic AI valuable in the first place.
The Gemini 3 series, along with recent releases like Anthropic's Claude Opus 4.6 and Sonnet 4.6, represents a threshold. Models have become reliable enough at planning, reasoning, and self-correction that the rails can start coming off.
Google's own Opal update is an acknowledgment of this shift. The new agent step does not require builders to pre-define every path through a workflow. Instead, it trusts the underlying model to evaluate the user's goal, assess available tools, and determine the optimal sequence of actions dynamically. This is the same pattern that made Claude Code's agentic workflows and tool calling viable: the models are good enough to decide the agent's next step, and often even to self-correct, without a human manually re-prompting every error. The difference is that Google is now packaging this capability into a consumer-grade, no-code product. That is a strong signal that the underlying technology has matured past the experimental phase.
For enterprise teams, the implication is direct: if you are still designing agent architectures that require pre-defined paths for every contingency, you are likely over-engineering. The new generation of models supports a design pattern where you define goals and constraints, provide tools, and let the model handle routing. It is a shift from programming agents to managing them.
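The difference between an agent on rails and a goal-directed agent comes down mostly to who owns the control flow. The minimal Python sketch below is not Opal's implementation, which Google has not published. It assumes a hypothetical `run_agent` loop in which the builder supplies only a goal, a tool registry, and a step budget, and a planner function chooses each next step. Here `scripted_planner` replays canned decisions so the example runs offline. In a real system that function would be a call to a frontier model. The tool names `fetch_sales` and `summarize` are illustrative only.

```python
from typing import Callable, Optional

# A tool reads and updates a shared state dict.
Tool = Callable[[dict], None]
# A planner maps (goal, available tool names, state) to the next tool name,
# or to None once it judges the goal complete.
Planner = Callable[[str, list, dict], Optional[str]]


def run_agent(goal: str, tools: dict, plan_next: Planner,
              state: dict, max_steps: int = 8) -> tuple:
    """Goal-directed loop: the planner, not the builder, picks each step."""
    trace = []
    for _ in range(max_steps):
        step = plan_next(goal, sorted(tools), state)
        if step is None:  # planner says the goal is met
            return state, trace
        if step not in tools:
            # Record the mistake in state so the planner can see it and correct course.
            state.setdefault("errors", []).append(f"unknown tool: {step}")
            continue
        tools[step](state)
        trace.append(step)
    raise RuntimeError("step budget exhausted before the goal was reached")


def scripted_planner(decisions: list) -> Planner:
    """Hypothetical stand-in for a model call: replays canned decisions."""
    it = iter(decisions)

    def plan_next(goal: str, tool_names: list, state: dict) -> Optional[str]:
        return next(it, None)

    return plan_next


# Hypothetical tools for illustration.
def fetch_sales(state: dict) -> None:
    state["rows"] = [120, 80, 100]


def summarize(state: dict) -> None:
    state["summary"] = f"total={sum(state['rows'])}"


tools = {"fetch_sales": fetch_sales, "summarize": summarize}
planner = scripted_planner(["fetch_salez", "fetch_sales", "summarize"])
state, trace = run_agent("summarize Q3 sales", tools, planner, {})
print(trace)             # ['fetch_sales', 'summarize']
print(state["summary"])  # total=300
print(state["errors"])   # ['unknown tool: fetch_salez']
```

The loop never encodes the order of the tools. The deliberately misspelled first decision shows one way a bad choice can be fed back into state for the planner to correct, instead of crashing the run. The `max_steps` budget remains as a guard against an agent that never converges.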
Memory across sessions: The feature that separates demos from production agents
The second major addition in the Opal update is persistent memory. Google now allows Opals to remember information across sessions, including user preferences, prior interactions, and accumulated context. As a result, agents improve with use rather than starting from zero each time.
Google has not disclosed the technical implementation behind Opal's memory system, but the pattern itself is well established in the agent-building community. Tools like OpenClaw handle memory primarily through markdown and JSON files, a simple approach that works well for single-user systems. Enterprise deployments face a harder problem: maintaining memory across multiple users, sessions, and security boundaries without leaking sensitive context between them.
This single-user versus multi-user memory divide is one of the most under-discussed challenges in enterprise agent deployment. A personal coding assistant that remembers your project structure is fundamentally different from a customer-facing agent that must maintain separate memory states for thousands of concurrent users while complying with data retention policies.
What the Opal update signals is that Google considers memory a core feature of agent architecture, not an optional add-on. For IT decision-makers evaluating agent platforms, this should inform procurement criteria. An agent framework without a clear memory strategy will produce impressive demos but struggle in production, where the value of an agent compounds over repeated interactions with the same users and datasets.
Human-in-the-loop is not a fallback; it is a design pattern
The third pillar of the Opal update is what Google calls "interactive chat": the ability for an agent to pause execution, ask the user a follow-up question, gather missing information, or present choices before proceeding.
In agent architecture terminology, this is human-in-the-loop orchestration, and its inclusion in a consumer product is telling. The most effective agents in production today are not fully autonomous. They are systems that know when they have reached the limits of their confidence and can gracefully hand control back to a human. This pattern separates reliable enterprise agents from the kind of runaway autonomous systems that have generated cautionary tales across the industry.
In frameworks like LangGraph, human-in-the-loop has traditionally been implemented as an explicit node in the graph, a hard-coded checkpoint where execution pauses for human review. Opal's approach is more fluid: the agent itself decides when it needs human input based on the quality and completeness of the information it has. This is a more natural interaction pattern, and one that scales better, because it does not require the builder to predict in advance exactly where human intervention will be needed.
For enterprise architects, the lesson is that human-in-the-loop should not be treated merely as a safety net bolted on after the agent is built. It should be a first-class capability of the agent framework itself, one that the model can invoke dynamically based on its own assessment of uncertainty.
Dynamic routing: Letting the model decide the path
The final significant feature is dynamic routing. Builders can define multiple paths through a workflow and let the agent select the appropriate one based on custom criteria. Google's example is an executive briefing agent that takes different paths depending on whether the user is meeting with a new or an existing client. In one case it searches the web for background information, and in the other it reviews internal meeting notes.
This is conceptually similar to the conditional branching that LangGraph and similar frameworks have supported for some time.
But Opal's implementation lowers the barrier dramatically by allowing builders to describe routing criteria in natural language rather than code. The model interprets the criteria and makes the routing decision, so a developer does not have to write explicit conditional logic.
The enterprise implication is significant. Dynamic routing powered by natural-language criteria means that business analysts and domain experts, not just developers, can define complex agent behaviors. This shifts agent development from a purely engineering discipline to one where domain knowledge becomes the primary bottleneck. That change could dramatically accelerate adoption across non-technical business units.
What Google is really building: An agent intelligence layer
Stepping back from individual features, the broader pattern in the Opal update is that Google is building an intelligence layer that sits between the user's intent and the execution of complex, multi-step tasks. Building on lessons from an internal agent SDK called "Breadboard", the agent step is not just another node in a workflow. It is an orchestration layer that can recruit models, invoke tools, manage memory, route dynamically, and interact with humans, all driven by the ever-improving reasoning capabilities of the underlying Gemini models.
This is the same architectural pattern emerging across the industry. Anthropic's Claude Code, with its ability to autonomously manage coding tasks overnight, relies on similar principles: a capable model, access to tools, persistent context, and feedback loops that allow self-correction. The Ralph Wiggum plugin formalized the insight that models can be pressed through their own failures to arrive at correct solutions. That is a brute-force form of self-correction, and Opal now packages part of it into a polished consumer experience.
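Google's executive-briefing example combines natural-language routing with agent-initiated questions. The sketch below is a hypothetical illustration, not Opal's API. Routes are described in plain sentences, and a `judge` function decides whether a sentence applies to the current context. When the context lacks the facts needed to decide, the agent asks the user instead of guessing. `keyword_judge` is a crude substring-matching stand-in for what would, in practice, be a model call. The route names and the `client_status` field are also assumptions made for illustration.

```python
from typing import Callable, Optional

# Routing criteria written in plain language, as a domain expert might author them.
ROUTES = {
    "research_new_client": "The user is meeting a client we have not worked with before.",
    "review_meeting_notes": "The user is meeting an existing client.",
}

Judge = Callable[[str, dict], Optional[bool]]


def keyword_judge(criterion: str, context: dict) -> Optional[bool]:
    """Hypothetical stand-in for asking a model whether a criterion holds.
    Returns None when the context lacks the information needed to decide."""
    status = context.get("client_status")
    if status is None:
        return None
    if "existing client" in criterion:
        return status == "existing"
    if "not worked with before" in criterion:
        return status == "new"
    return False


def route(context: dict, judge: Judge, ask_user: Callable[[str], str]) -> str:
    """Pick the first route whose criterion holds, asking the user only if needed."""
    for name, criterion in ROUTES.items():
        verdict = judge(criterion, context)
        if verdict is None:
            # Dynamic human-in-the-loop: pause only because information is missing.
            answer = ask_user("Is this a new or an existing client?")
            context["client_status"] = answer.strip().lower()
            verdict = judge(criterion, context)
        if verdict:
            return name
    raise ValueError("no route matched")


questions = []


def fake_user(question: str) -> str:
    questions.append(question)
    return "Existing"


print(route({"client": "Acme"}, keyword_judge, fake_user))        # review_meeting_notes
print(route({"client_status": "new"}, keyword_judge, fake_user))  # research_new_client
print(len(questions))                                             # 1
```

The agent asks its question only when the context is missing information. That is the practical difference between a dynamic human-in-the-loop capability and a fixed review checkpoint placed in the graph ahead of time.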
For enterprise teams, the takeaway is that agent architecture is converging on a common set of primitives: goal-directed planning, tool use, persistent memory, dynamic routing, and human-in-the-loop orchestration. The differentiator will not be which primitives you implement. It will be how well you integrate them, and how effectively you use the improving capabilities of frontier models to reduce the amount of manual configuration required.
The practical playbook for enterprise agent builders
Google shipping these capabilities in a free, consumer-facing product sends a clear message: the foundational patterns for building effective AI agents are no longer cutting-edge research. They are productized. Enterprise teams that have been waiting for the technology to mature now have a reference implementation they can study, test, and learn from at zero cost.
The practical steps are straightforward. First, evaluate whether your current agent architectures are over-constrained. If every decision point requires hard-coded logic, you are likely not taking advantage of the planning capabilities of current frontier models. Second, prioritize memory as a core architectural component, not an afterthought. Third, design human-in-the-loop as a dynamic capability the agent can invoke, rather than a fixed checkpoint in a workflow. And fourth, explore natural-language routing as a way to bring domain experts into the agent design process.
Opal itself probably won't become the platform enterprises adopt. But the design patterns it embodies (adaptive, memory-rich, human-aware agents powered by frontier models) are the patterns that will define the next generation of enterprise AI. Google has shown its hand. The question for IT leaders is whether they are paying attention.
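The single-user versus multi-user memory divide becomes concrete once memory is treated as a core architectural component. The following Python sketch illustrates the idea under stated assumptions. It does not describe how Opal or any product stores memory. A hypothetical `AgentMemory` class keeps a separate namespace per user and purges entries older than a retention window. The injectable `clock` parameter exists only so the example can simulate time passing.

```python
import time
from collections import defaultdict
from typing import Callable, Optional


class AgentMemory:
    """Per-user memory with a retention window (hypothetical design sketch)."""

    def __init__(self, retention_seconds: float,
                 clock: Callable[[], float] = time.time):
        self.retention = retention_seconds
        self.clock = clock
        # Each user gets a separate namespace: user_id -> key -> (timestamp, value),
        # so a lookup for one user can never return another user's entries.
        self._store: dict = defaultdict(dict)

    def remember(self, user_id: str, key: str, value: str) -> None:
        self._store[user_id][key] = (self.clock(), value)

    def recall(self, user_id: str, key: str) -> Optional[str]:
        self.purge_expired()  # enforce retention before every read
        entry = self._store.get(user_id, {}).get(key)
        return entry[1] if entry else None

    def purge_expired(self) -> int:
        """Drop entries older than the retention window; return how many were removed."""
        cutoff = self.clock() - self.retention
        removed = 0
        for user_id in list(self._store):
            items = self._store[user_id]
            for key in [k for k, (ts, _) in items.items() if ts < cutoff]:
                del items[key]
                removed += 1
            if not items:
                del self._store[user_id]
        return removed


now = [0.0]  # simulated clock, in seconds
mem = AgentMemory(retention_seconds=30 * 24 * 3600, clock=lambda: now[0])
mem.remember("alice", "preferred_format", "bullet points")
print(mem.recall("alice", "preferred_format"))  # bullet points
print(mem.recall("bob", "preferred_format"))    # None
now[0] += 31 * 24 * 3600                        # 31 days later
print(mem.recall("alice", "preferred_format"))  # None
```

A production system would also need durable storage, encryption, and authorization checks on `user_id`. The sketch shows only the two properties the article highlights: isolation between users and compliance with a retention policy.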

Actions by the president and the Pentagon appeared to drive a wedge between Washington and the tech industry, whose leaders and workers spoke out for the start-up.

The landscape of enterprise artificial intelligence shifted fundamentally today as OpenAI announced $110 billion in new funding from three of tech's largest firms: $30 billion from SoftBank, $30 billion from Nvidia, and $50 billion from Amazon.
But while SoftBank and Nvidia are providing money, OpenAI is going further with Amazon, establishing an upcoming "Stateful Runtime Environment" on Amazon Web Services (AWS), the world's most used cloud environment. The move signals OpenAI's and Amazon's vision of the next phase of the AI economy: moving from chatbots to autonomous "AI coworkers" known as agents. It also reflects their view that this evolution requires a different architectural foundation than the one that built GPT-4.
For enterprise decision-makers, this announcement isn't just a headline about massive capital. It is a technical roadmap for where the next generation of agentic intelligence will live and breathe. It is especially good news for enterprises already using AWS, which will get more options with a new runtime environment from OpenAI coming soon (the companies have yet to announce a precise timeline for when it will arrive).
The great divide between 'stateless' and 'stateful'
At the heart of the new OpenAI-Amazon partnership is a technical distinction that will define developer workflows for the next decade: the difference between "stateless" and "stateful" environments.
To date, most developers have interacted with OpenAI through stateless APIs. In a stateless model, every request is an isolated event. The model has no "memory" of previous interactions unless the developer manually feeds the entire conversation history back into the prompt. OpenAI's longtime cloud partner and major investor, Microsoft Azure, remains the exclusive third-party cloud provider for these stateless APIs.
The newly announced Stateful Runtime Environment, by contrast, will be hosted on Amazon Bedrock, a paradigm shift.
This environment allows models to maintain persistent context, memory, and identity. Instead of a series of disconnected calls, the stateful environment enables "AI coworkers" to handle ongoing projects, remember prior work, and move seamlessly across different software tools and data sources. As OpenAI notes on its website: "Now, instead of manually stitching together disconnected requests to make things work, your agents automatically execute complex steps with 'working context' that carries forward memory/history, tool and workflow state, environment use, and identity/permission boundaries."
For builders of complex agents, this reduces the "plumbing" required to maintain context, because the infrastructure itself now handles the persistent state of the agent.
OpenAI Frontier and the AWS Integration
The vehicle for this stateful intelligence is OpenAI Frontier, an end-to-end platform designed to help enterprises build, deploy, and manage teams of AI agents, which launched in early February 2026. Frontier is positioned as a solution to the "AI opportunity gap": the disconnect between model capabilities and a business's ability to actually put them into production.
Key features of the Frontier platform include:
Shared Business Context: Connecting siloed data from CRMs, ticketing tools, and internal databases into a single semantic layer.
Agent Execution Environment: A dependable space where agents can run code, use computer tools, and solve real-world problems.
Built-in Governance: Every AI agent has a unique identity with explicit permissions and boundaries, allowing for use in regulated environments.
While the Frontier application itself will continue to be hosted on Microsoft Azure, AWS has been named the exclusive third-party cloud distribution provider for the platform.
This means that while the "engine" may sit on Azure, AWS customers will be able to access and manage these agentic workloads directly through Amazon Bedrock, integrated with AWS's existing infrastructure services.
OpenAI opens the door to enterprises: how to register your interest in its upcoming Stateful Runtime Environment on AWS
For now, OpenAI has launched a dedicated Enterprise Interest Portal on its website. It serves as the primary intake point for organizations looking to move past isolated pilots and into production-grade agentic workflows. The portal is a structured "request for access" form where decision-makers provide:
Firmographic Data: Basic details, including company size (ranging from startups of 1–50 to large-scale enterprises with 20,000+ employees) and contact information.
Business Needs Assessment: A dedicated field for leadership to outline specific business challenges and requirements for "AI coworkers".
By submitting this form, enterprises signal their readiness to work directly with OpenAI and AWS teams to implement solutions that require high-reliability state management, such as multi-system customer support, sales operations, and finance audits.
Community and leadership reactions
The scale of the announcement was mirrored in the public statements from the key players on social media. Sam Altman, CEO of OpenAI, expressed excitement about the Amazon partnership, specifically highlighting the "stateful runtime environment" and the use of Amazon's custom Trainium chips. However, Altman was quick to clarify the boundaries of the deal: "Our stateless API will remain exclusive to Azure, and we will build out much more capacity with them".
Amazon CEO Andy Jassy emphasized the demand from his own customer base, stating, "We have lots of developers and companies eager to run services powered by OpenAI models on AWS". He noted that the collaboration would "change what’s possible for customers building AI apps and agents".
Early adopters have already begun to weigh in on the utility of the Frontier approach. Joe Park, EVP at State Farm, noted that the platform is helping the company accelerate its AI capabilities to "help millions plan ahead, protect what matters most, and recover faster".
The enterprise decision: where to spend your dollars?
For CTOs and enterprise decision-makers, the OpenAI-Amazon-Microsoft triangle creates a new set of strategic choices. Where to allocate budget now depends heavily on the specific use case:
For High-Volume, Standard Tasks: If your organization relies on standard API calls for content generation, summarization, or simple chat, Microsoft Azure remains the primary destination. These "stateless" calls are exclusive to Azure, even if they originate from an Amazon-linked collaboration.
For Complex, Long-Running Agents: If your goal is to build "AI coworkers" that require deep integration with AWS-hosted data and persistent memory across weeks of work, the AWS Stateful Runtime Environment is the clear choice.
For Custom Infrastructure: OpenAI has committed to consuming 2 gigawatts of AWS Trainium capacity to power Frontier and other advanced workloads. This suggests that enterprises looking for the most cost-efficient way to run OpenAI models at massive scale may find an advantage in the AWS-Trainium ecosystem.
Licensing, revenue and the Microsoft 'safety net'
Despite the massive infusion of Amazon capital, the legal and financial ties between Microsoft and OpenAI remain remarkably rigid. A joint statement released by both companies clarified that their "commercial and revenue share relationship remains unchanged". Crucially, Microsoft continues to maintain its "exclusive license and access to intellectual property across OpenAI models and products". Furthermore, Microsoft will receive a share of the revenue generated by the OpenAI-Amazon partnership.
This ensures that while OpenAI is diversifying its infrastructure, Microsoft remains the ultimate beneficiary of OpenAI's commercial success, regardless of which cloud the compute actually runs on.
The definition of Artificial General Intelligence (AGI) also remains a protected term in the Microsoft agreement. The Amazon deal has not altered the contractual processes for determining when AGI has been reached, or the subsequent impact on commercial licensing.
Ultimately, OpenAI is positioning itself as more than a model or tool provider. It is an infrastructure player attempting to straddle the two largest clouds on Earth. For the user, this means more choice and more specialized environments. For the enterprise, it means that the era of "one-size-fits-all" AI procurement is over. The choice between Azure and AWS for OpenAI services is now a technical decision about the nature of the work itself: whether your AI needs to simply "think" (stateless) or to "remember and act" (stateful).
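The stateless/stateful distinction at the center of this deal is about who holds the conversation state, not about what the model can do. The Python sketch below uses a hypothetical `fake_model` in place of any real OpenAI or AWS API, and all class names are illustrative. In the stateless version, the caller resends the whole transcript on every request. In the stateful version, a hypothetical runtime keeps each session's history server-side, so the caller sends only the new message and a session ID.

```python
def fake_model(messages: list) -> str:
    """Hypothetical model stand-in: reports how many messages it was shown."""
    return f"seen {len(messages)} messages"


class StatelessClient:
    """The caller owns the history and must resend all of it on every request."""

    def __init__(self) -> None:
        self.history: list = []
        self.sent_per_call: list = []  # messages transmitted per request

    def ask(self, message: str) -> str:
        self.history.append(message)
        payload = list(self.history)  # full transcript every time
        self.sent_per_call.append(len(payload))
        reply = fake_model(payload)
        self.history.append(reply)
        return reply


class StatefulRuntime:
    """Hypothetical server side: session history persists between calls."""

    def __init__(self) -> None:
        self.sessions: dict = {}

    def ask(self, session_id: str, message: str) -> str:
        # The caller transmits one message; the runtime supplies the rest.
        history = self.sessions.setdefault(session_id, [])
        history.append(message)
        reply = fake_model(history)
        history.append(reply)
        return reply


client = StatelessClient()
for m in ["hi", "next", "again"]:
    client.ask(m)
print(client.sent_per_call)  # [1, 3, 5]

runtime = StatefulRuntime()
for m in ["hi", "next", "again"]:
    last = runtime.ask("agent-42", m)
print(last)  # seen 5 messages
```

In both cases the model ends up seeing the same five messages on the third turn. What changes is the plumbing the caller has to maintain, and that plumbing is what the Stateful Runtime Environment is meant to absorb.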

Resident Evil has reinvented itself more than almost any franchise in gaming, and at one point it nearly tore itself apart trying.

The post Snapdragon 8 Elite Gen 5 vs Samsung Exynos 2600 Comparison appeared first on Android Headlines.

The Federal Communications Commission has given the go-ahead for two of the US' biggest cable providers, Charter Communications and Cox Communications, to merge. Charter announced its intention to acquire Cox for $34.5 billion in May 2025, with specific plans to inherit Cox's managed IT, commercial fiber and cloud businesses, while folding the company's residential cable service into a subsidiary.
"By approving this deal, the FCC ensures big wins for Americans," FCC Chairman Brendan Carr said in a statement. "This deal means that jobs are coming back to America that had been shipped overseas. It means that modern, high-speed networks will get built out in more communities across rural America. And it means that customers will get access to lower priced plans. On top of this, the deal enshrines protections against DEI discrimination."
The FCC claims that Charter plans to invest "billions" to upgrade its network following the closure of the deal, leading to "faster broadband and lower prices." The company's "Rural Construction Initiative" will also extend those improvements to rural states lacking consistent internet service. The FCC was heavily invested in that goal during the Biden administration, but has been pulling back from it since President Donald Trump appointed Carr. The FCC also claims Charter will onshore jobs currently handled offshore by Cox employees and commit to "new safeguards to protect against DEI discrimination," which essentially amounts to hiring, recruiting and promoting employees based on "skills, qualifications, and experience."
While Carr's FCC paints a rosy picture of Charter's acquisition, history has provided multiple examples of mergers having the opposite effect on jobs and pricing. For example, redundancies created when T-Mobile merged with Sprint in 2020 led to a wave of layoffs at the carrier.
And funnily enough, in 2018, not long after Charter's merger with Time Warner Cable was approved by the FCC, the company raised prices on its Spectrum service by over $91 a year.
The FCC's obsession with diversity, equity and inclusion as part of the deal is stranger, if only because it appears to fall outside of the commission's purpose of maintaining fair competition in the telecommunications industry. It does fit with other mergers the FCC has approved under Carr, however. Skydance's acquisition of Paramount was approved in 2025 under the condition it wouldn't establish any DEI programs.
This article originally appeared on Engadget at https://www.engadget.com/big-tech/fcc-approves-the-merger-of-cable-giants-cox-and-charter-230258865.html?src=rss

The company said such trades violate its internal company policies about using confidential information for personal gain.

Dina Bass / Bloomberg: Dell stock closes up 22%, its biggest single-day gain since March 1, 2024, after the company gave an outlook for sales of its AI servers that exceeded estimates. Dell Technologies Inc. shares jumped the most in two years after the company gave an outlook for sales of its artificial intelligence servers …

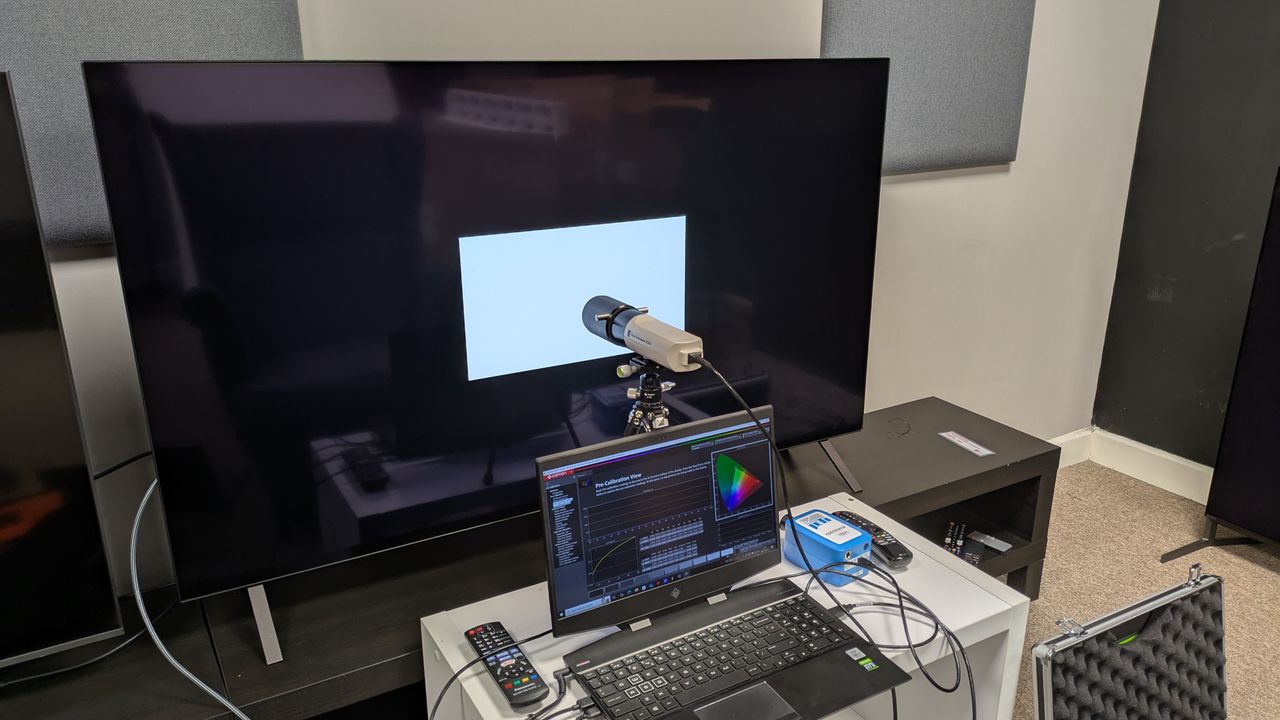

LG Display has been awarded '100% dimming consistency' by a third-party assessor to show that OLEDs are more consistent than LCDs.

Avoid the dreaded low-battery alert on your devices with this GoCable 8-in-1 EDC 100W Cable, now just $21.99 (reg. $49.99).

I test coffee makers for a living, and these are my top-rated espresso machines for small kitchens.

From season 4 arrives on April 19 – here's everything we know so far, including the sci-fi horror show's confirmed release date, cast, plot rumors, news and more.